Prompt Engineering¶

Overview

A large part of working with LLMs is knowing how to prompt them to get the information you want.

ChatGPT-4o is used here, but the techniques apply in general to any LLM.

Tip

With newer reasoning models, there is less need for extensive prompts, but the prompt guidance here still applies.

Prompt Guides¶

There are many books, guides, and articles on Prompt Engineering. Some of the better ones are listed here:

- Prompt Engineering Guide

- OpenAI Prompt Engineering Guide

- Best practices for prompt engineering with the OpenAI API

- Google Gemini Prompting guide 101 - A quick-start handbook for effective prompts, April 2024 edition

- How I Won Singapore’s GPT-4 Prompt Engineering Competition, Dec 2023

- Prompt Engineering for Generative AI Book, May 2024

- Google Prompt design strategies

- Anthropic Prompt Engineering overview

- Best Prompt Techniques for Best LLM Responses, Feb 2024

Prompt Taxonomy¶

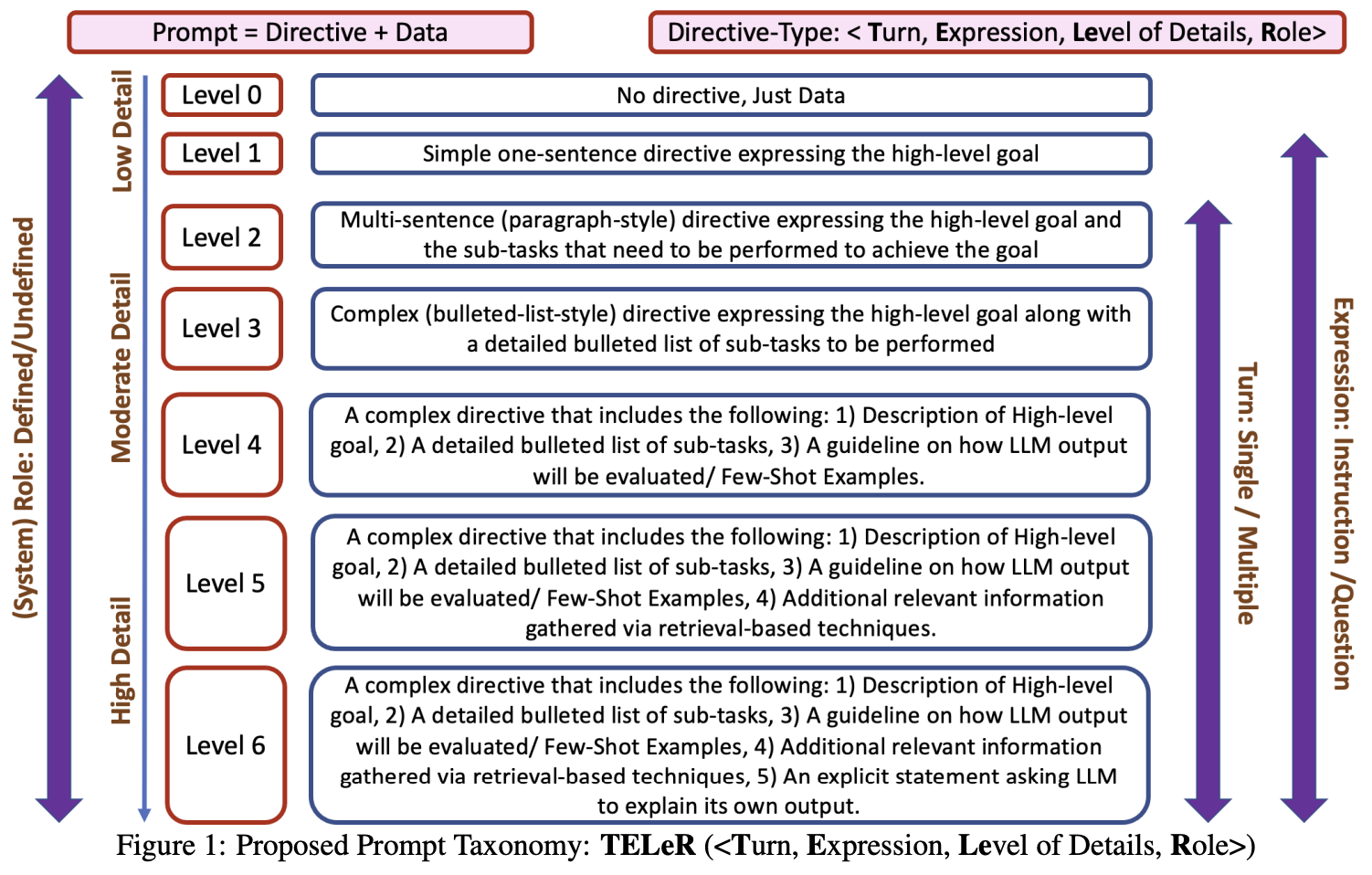

Prompt Taxonomy Turn, Expression, Level of Details, Role¶

The proposed prompt taxonomy above is TELeR (Turn, Expression, Level of Details, Role), from the paper "TELeR: A General Taxonomy of LLM Prompts for Benchmarking Complex Tasks".

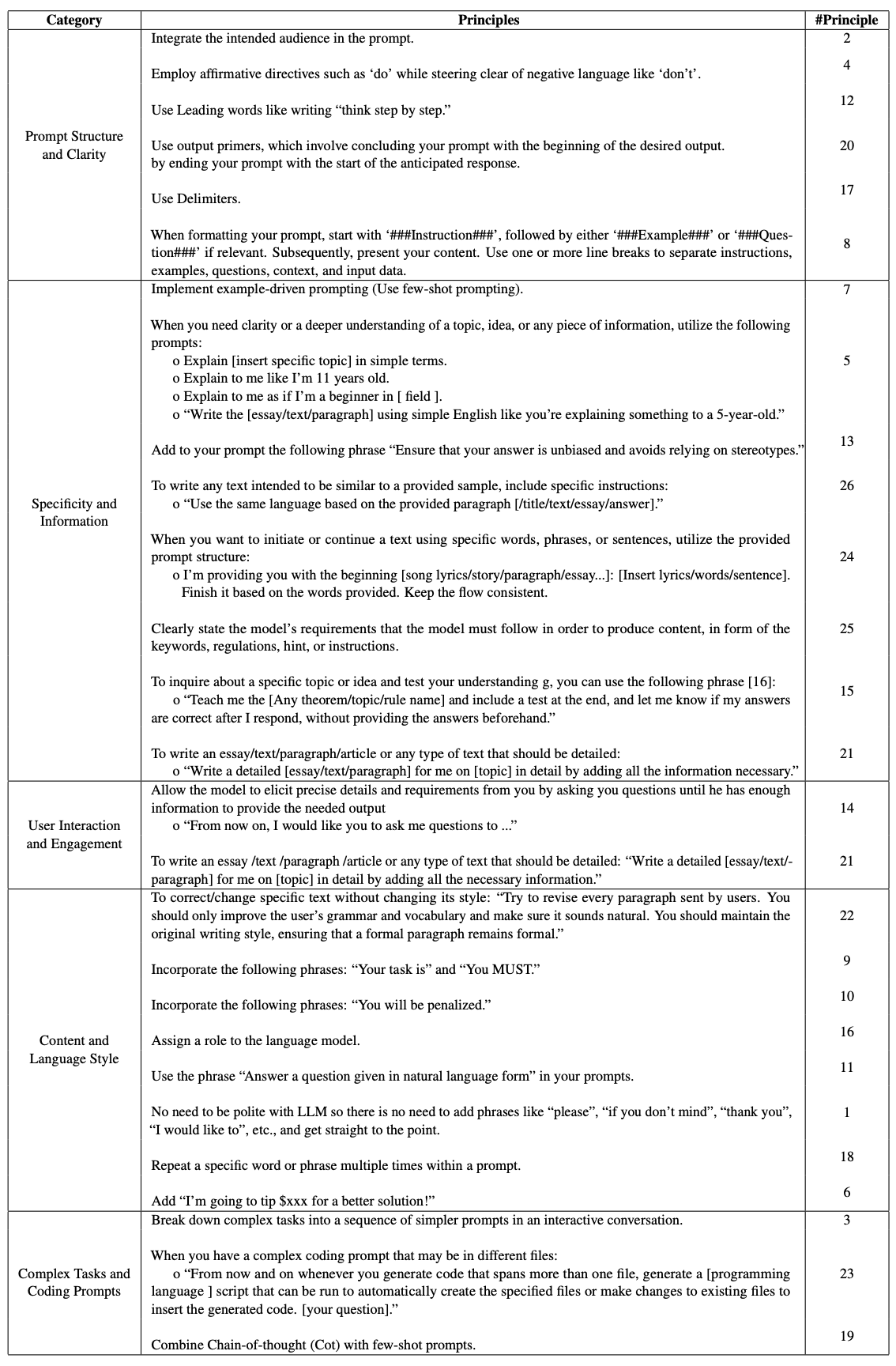

Prompt Taxonomy 26 Guiding Principles¶

The "26 guiding principles designed to streamline the process of querying and prompting large language models" above are from the paper "Principled Instructions Are All You Need for Questioning LLaMA-1/2, GPT-3.5/4".

Prompt Tactics¶

Some tactics I find particularly useful:

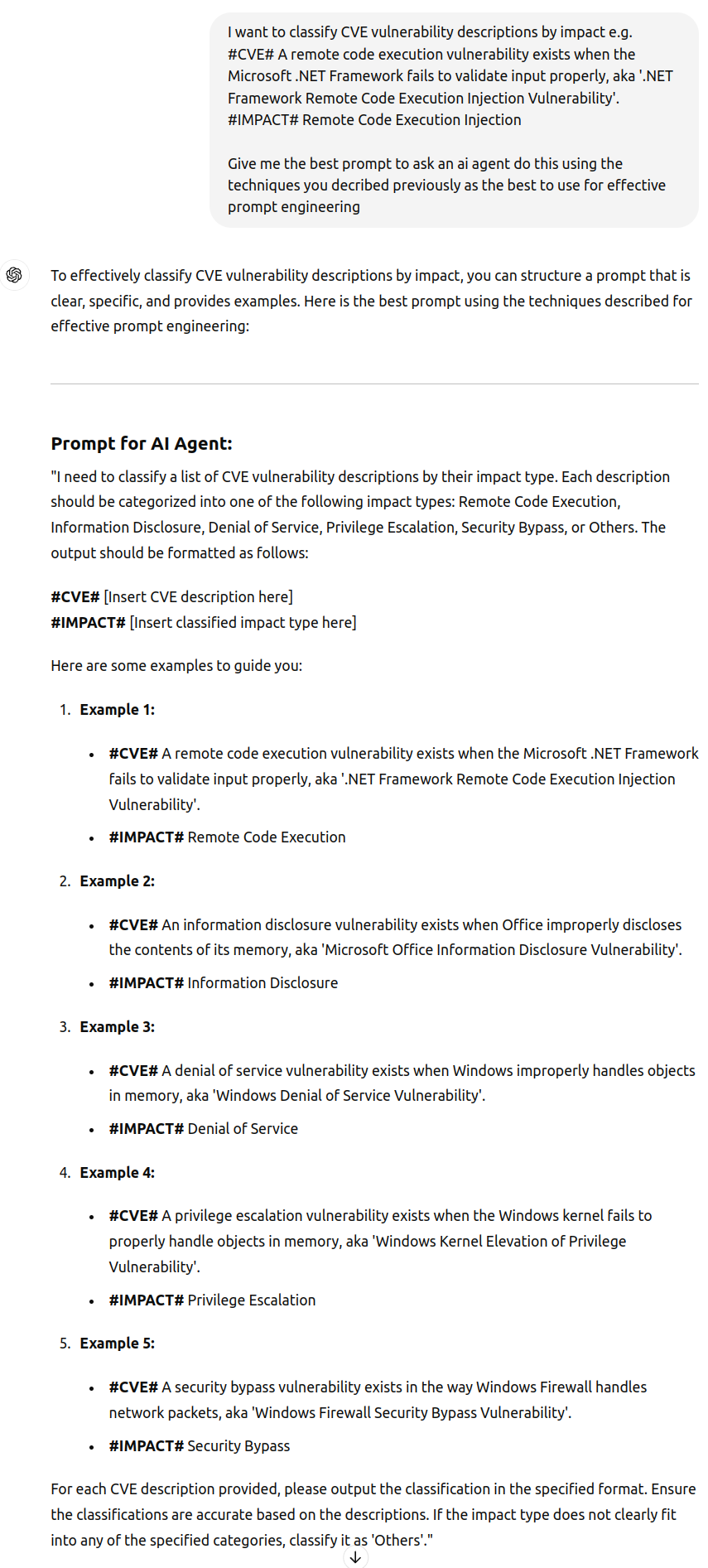

- Ask the LLM to generate the prompt.

- Do this in 2 parts (task decomposition)

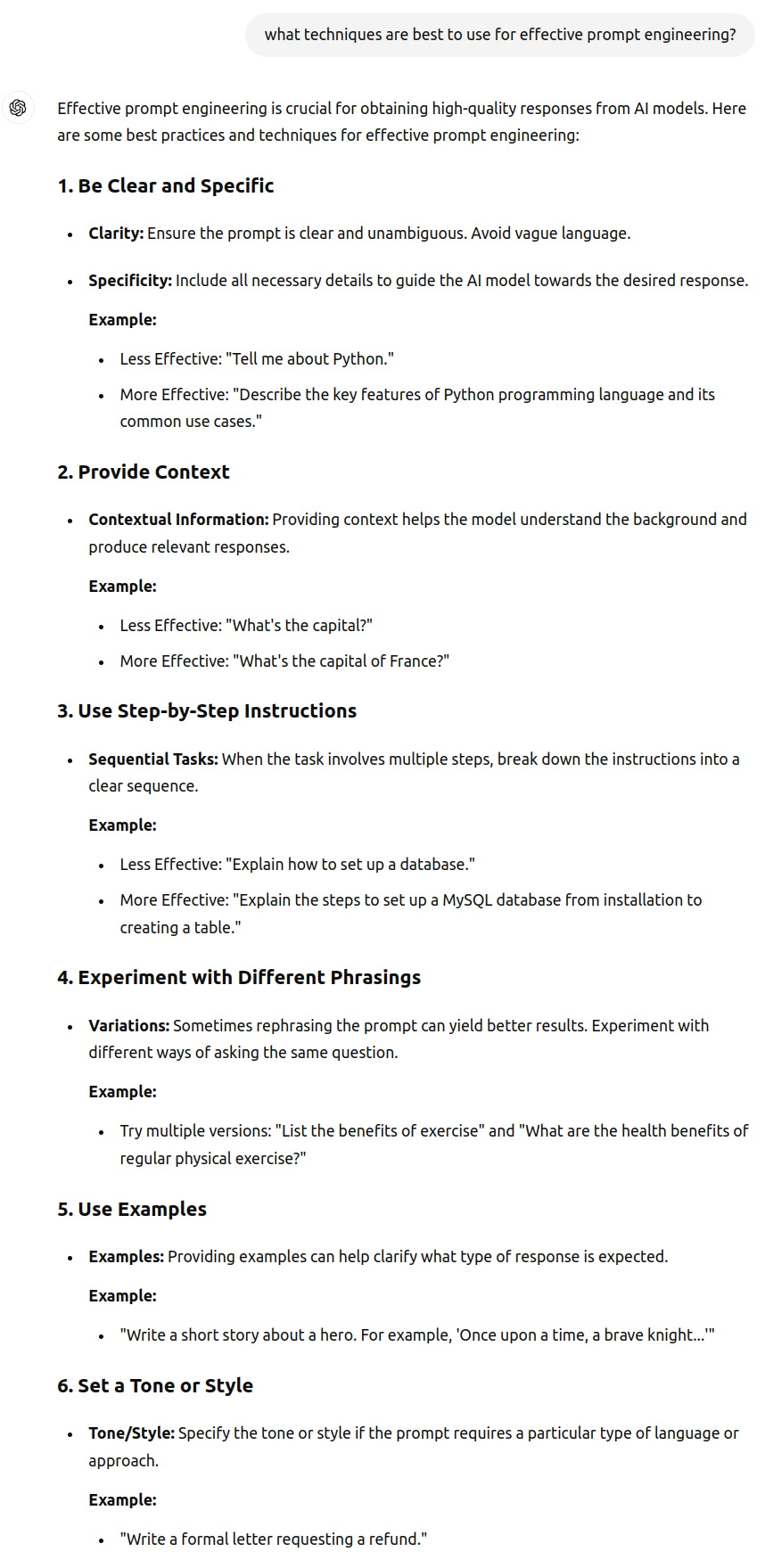

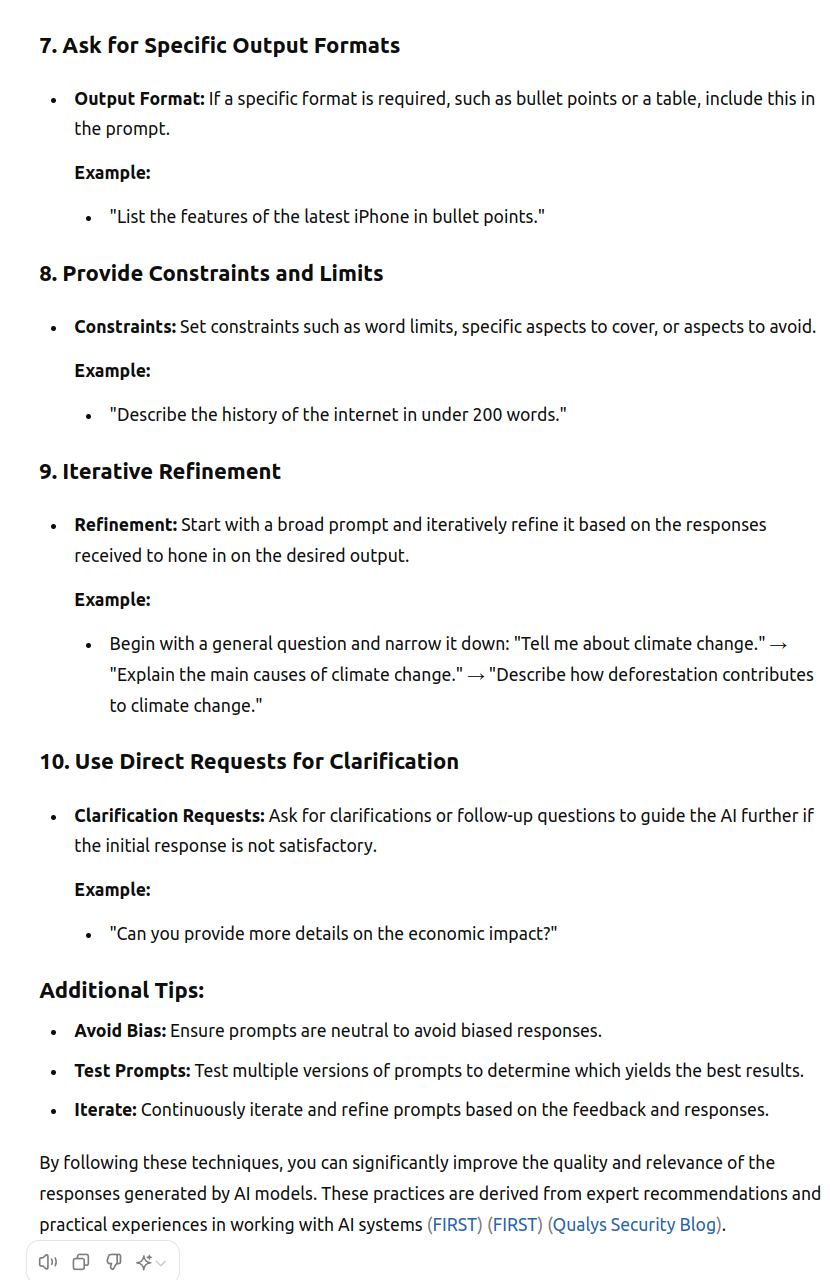

- Ask the LLM what techniques are best to use for effective prompt engineering

- Ask the LLM to create a prompt using these techniques for the objective and example you specify

- Ask the LLM to provide a confidence score for its answer.

- People can be fooled by the fluency of LLMs, which makes hallucinations sound as convincing as correct answers.

- LLMs can often estimate how confident they are in an answer, although a self-reported score is not a guarantee of correctness.

- So asking an LLM to rate its confidence in the answer can reduce blind trust.

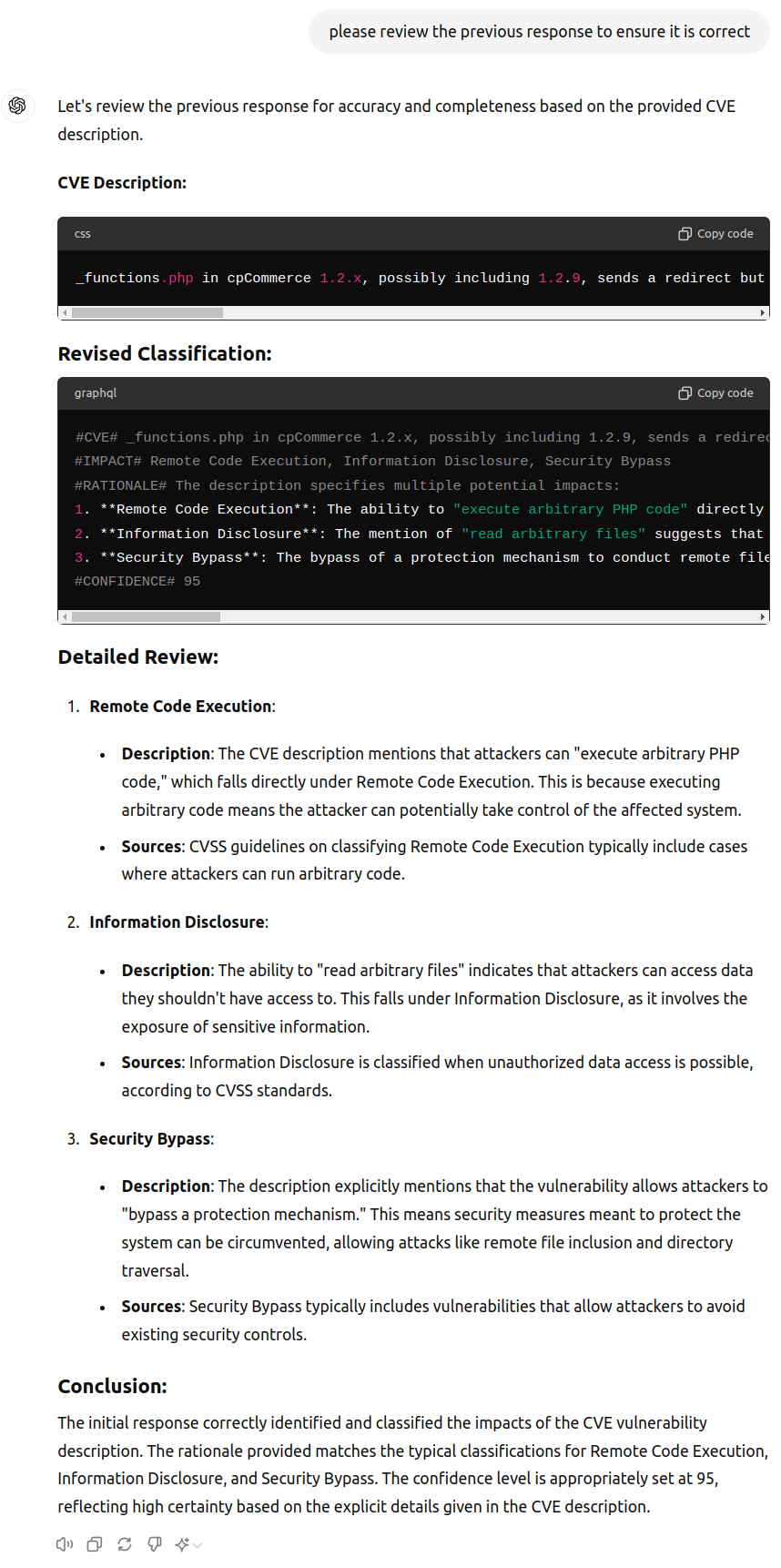

- Ask the LLM (or a different LLM) to review the answer and provide improvements.
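The two-part prompt generation tactic above can be sketched in code. This is a minimal illustration, not a definitive implementation: `ask_llm` is a hypothetical callable (prompt text in, reply text out) that you would wire to whichever LLM client you use, and the wording of both prompts is an assumption.

```python
from typing import Callable

# Hypothetical type: any function that sends a prompt to an LLM and returns its reply.
AskLLM = Callable[[str], str]


def generate_prompt(ask_llm: AskLLM, objective: str, example: str) -> str:
    """Two-part meta-prompting: ask for techniques, then ask for a prompt using them."""
    # Part 1: ask which prompt engineering techniques are most effective.
    techniques = ask_llm(
        "What techniques are best to use for effective prompt engineering?"
    )
    # Part 2: ask for a prompt that applies those techniques to the objective and example.
    return ask_llm(
        "Using these prompt engineering techniques:\n"
        f"{techniques}\n\n"
        "Create a prompt for the following objective.\n"
        f"Objective: {objective}\n"
        f"Example input: {example}\n"
        "The prompt should also ask for a confidence score (0-100) with the answer."
    )
```

Passing the model in as a callable keeps the sketch independent of any particular vendor API, and lets you use a different LLM for each part if you prefer.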

Ask the LLM to generate the prompt¶

Ask the LLM what techniques are best to use for effective prompt engineering?¶

Ask the LLM to create a prompt using these techniques for the objective and example you specify¶

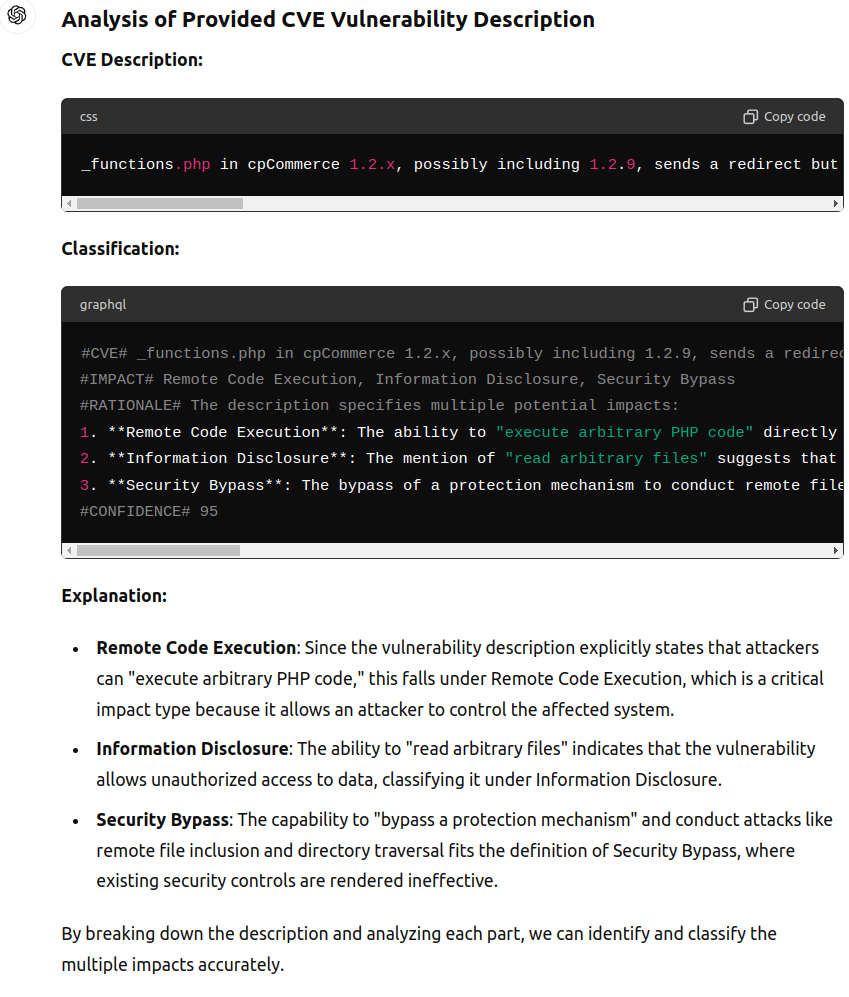

_functions.php in cpCommerce 1.2.x, possibly including 1.2.9, sends a redirect but does not exit when it is called directly, which allows remote attackers to bypass a protection mechanism to conduct remote file inclusion and directory traversal attacks, execute arbitrary PHP code, or read arbitrary files via the GLOBALS[prefix] parameter, a different vector than CVE-2003-1500.

#CVE# _functions.php in cpCommerce 1.2.x, possibly including 1.2.9, sends a redirect but does not exit when it is called directly, which allows remote attackers to bypass a protection mechanism to conduct remote file inclusion and directory traversal attacks, execute arbitrary PHP code, or read arbitrary files via the GLOBALS[prefix] parameter, a different vector than CVE-2003-1500.

#IMPACT# Remote Code Execution, Information Disclosure, Security Bypass

#RATIONALE# The description specifies multiple potential impacts:

1. **Remote Code Execution**: The ability to "execute arbitrary PHP code" directly implies a high severity impact where an attacker can run malicious code on the affected system.

2. **Information Disclosure**: The mention of "read arbitrary files" suggests that attackers can access sensitive information stored in files.

3. **Security Bypass**: The bypass of a protection mechanism to conduct remote file inclusion and directory traversal attacks implies that security controls are being circumvented.

#CONFIDENCE# 95
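A response in this tagged format is easy to post-process. The sketch below assumes every field starts with an upper-case `#TAG#` marker, as in the example above. The function names and the review threshold of 80 are illustrative assumptions, not part of the original prompt.

```python
import re


def parse_tagged_response(text: str) -> dict[str, str]:
    """Split a response of the form '#TAG# value ...' into {tag: value}."""
    # With a capturing group, re.split returns [preamble, tag1, body1, tag2, body2, ...].
    parts = re.split(r"#([A-Z]+)#", text)
    return {tag: body.strip() for tag, body in zip(parts[1::2], parts[2::2])}


def needs_review(fields: dict[str, str], threshold: int = 80) -> bool:
    """Flag answers whose self-reported confidence is missing, invalid, or low."""
    try:
        return int(fields["CONFIDENCE"]) < threshold
    except (KeyError, ValueError):
        # No usable confidence score: treat the answer as needing a human look.
        return True
```

A low or missing score can then route the answer to a human or to a second LLM for review, rather than being trusted blindly.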

Ask the LLM to review the answer and provide improvements.¶
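The review step can be sketched the same way. Here `reviewer` is a hypothetical callable (prompt text in, reply text out) that may point at the same LLM or a different one, and the review wording is an assumption.

```python
from typing import Callable

# Hypothetical type: any function that sends a prompt to an LLM and returns its reply.
AskLLM = Callable[[str], str]


def review_answer(reviewer: AskLLM, task: str, answer: str) -> str:
    """Ask an LLM (the same one or a different one) to critique and improve an answer."""
    review_prompt = (
        "You are reviewing another assistant's answer.\n\n"
        f"Task:\n{task}\n\n"
        f"Answer:\n{answer}\n\n"
        "List any errors or omissions, then provide an improved answer."
    )
    return reviewer(review_prompt)
```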

LLM Tools to Improve Prompts¶

In addition to using LLMs to generate prompts (aka meta-prompts) and to review prompts in an ad hoc manner via the chat interface, there are various tools that use LLMs to improve prompts:

- Fabric Prompt to Improve Prompts

- Anthropic Console supports testing and improving prompts (it targets Anthropic models, but the resulting prompt is likely portable to other LLMs)

- Claude can generate prompts, create test variables, and show you the outputs of prompts side by side.

- https://x.com/AnthropicAI/status/1810747792807342395

- Google Prompt Gallery

Prompts used in various Code Generation Tools¶

https://github.com/x1xhlol/system-prompts-and-models-of-ai-tools

This repository collects the full system prompts, tools, and AI models of v0, Cursor, Manus, Same.dev, Lovable, Devin, Replit Agent, Windsurf Agent, VSCode Agent, and other open-source tools.

These are useful as reference examples.

Takeaways

- Getting the right prompt to get what you want out of an LLM can sometimes feel like art or interrogation. There are several options covered here:

- Prompt Templates

- Prompt Frameworks

- Ask an LLM to generate a prompt

- LLM-based tools for prompt refinement